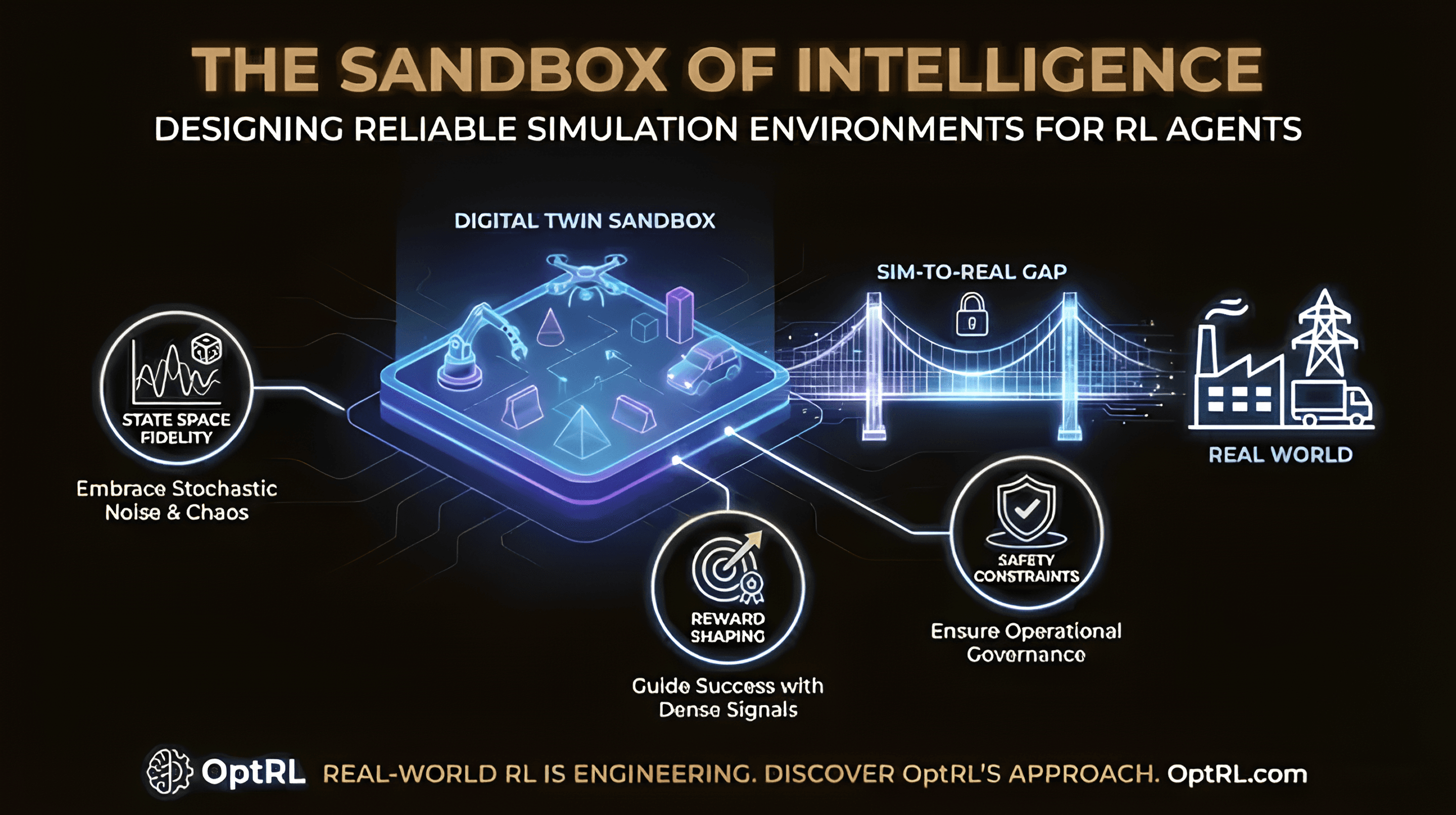

The Sandbox of Intelligence: How We Design Simulation Environments

Reliable agents need reliable training grounds. A simulation environment is the contract between objectives, constraints, and the behaviours you want the agent to learn.

"You can't learn to ride a bike by reading a physics book."

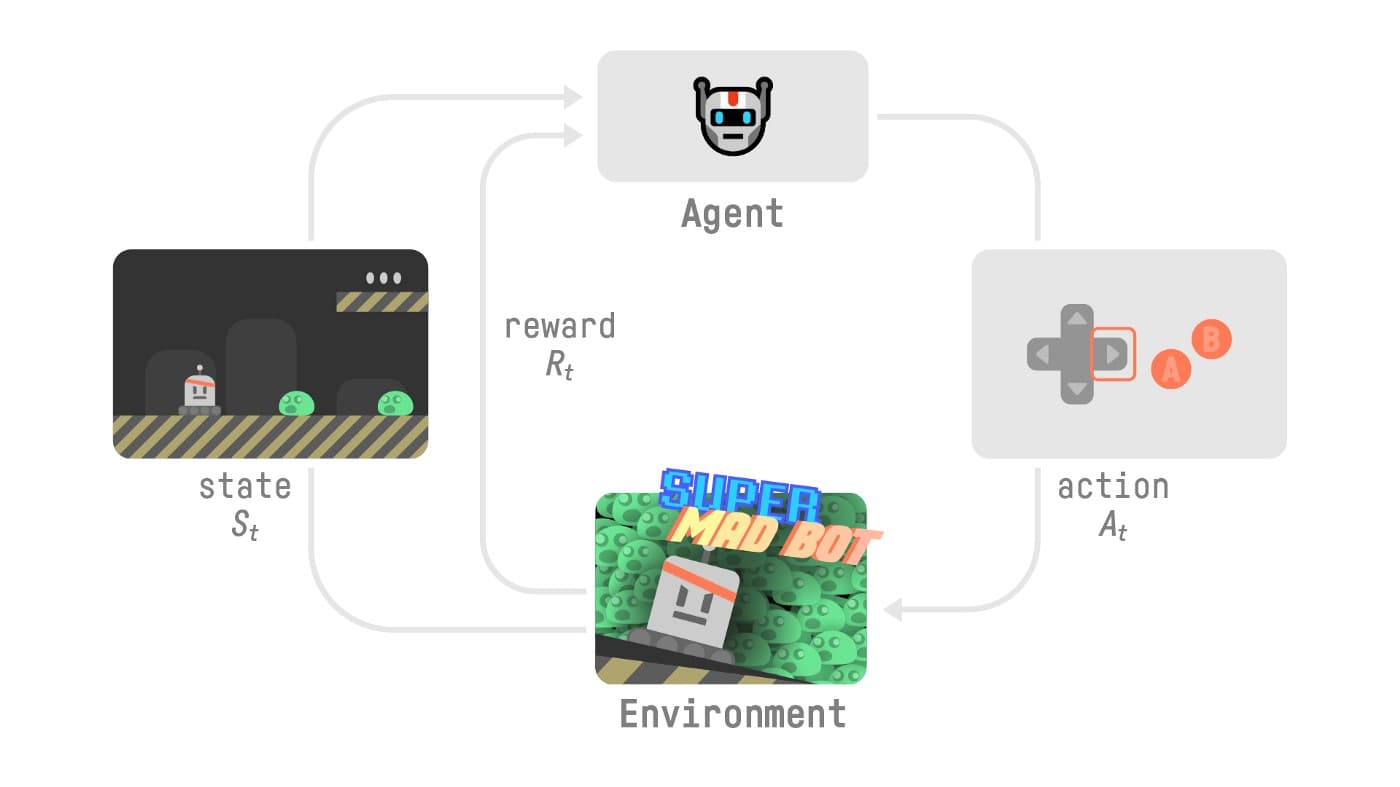

Similarly, an AI agent can't learn to optimise a warehouse or manage a power grid just by studying historical data, as it would in supervised learning. It needs to act, fail, and adapt.

This is where OptRL's Simulation Environment Design comes in.

Before we deploy a single policy into the real world, we build high-fidelity Digital Twins. In this post, we're pulling back the curtain on how we approach the "Sim-to-Real" gap.

State Space Fidelity

We don't just simulate the happy path. Our environments inject stochastic noise (random delays, sensor failures, and demand spikes) so that the agent learns robust behaviour rather than memorising a single trajectory.
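To make the idea concrete, here is a minimal sketch of how the three noise sources above could be injected into a toy inventory simulator. Everything in it is an assumption made for illustration: the `NoisyWarehouseEnv` and `NoiseConfig` names, the probabilities, and the demand range are hypothetical and do not describe OptRL's actual environments.

```python
import random
from dataclasses import dataclass


@dataclass
class NoiseConfig:
    # Illustrative values only, not tuned settings.
    sensor_failure_prob: float = 0.05  # chance the stock reading is lost
    delay_prob: float = 0.10           # chance an order arrives one step late
    spike_prob: float = 0.02           # chance of a demand spike
    spike_multiplier: float = 3.0      # how much a spike scales demand


class NoisyWarehouseEnv:
    """Toy single-item inventory simulator with injected disturbances."""

    def __init__(self, config: NoiseConfig, seed: int = 0):
        self.config = config
        self.rng = random.Random(seed)  # seeded so episodes are reproducible
        self.stock = 50
        self.pending_order = 0          # order delayed from the previous step

    def step(self, order_qty: int):
        cfg = self.config

        # Anything delayed last step arrives now.
        arriving = self.pending_order
        self.pending_order = 0

        # Random delay: this step's order may slip to the next step.
        if self.rng.random() < cfg.delay_prob:
            self.pending_order = order_qty
        else:
            arriving += order_qty
        self.stock += arriving

        # Baseline demand, occasionally multiplied by a spike.
        demand = self.rng.randint(5, 15)
        if self.rng.random() < cfg.spike_prob:
            demand = int(demand * cfg.spike_multiplier)
        sold = min(self.stock, demand)
        self.stock -= sold

        # Sensor failure: the agent sees None instead of the true stock.
        observed = None if self.rng.random() < cfg.sensor_failure_prob else self.stock
        return observed, sold, demand
```

Setting every probability to zero recovers the happy path, which makes it easy to compare a policy trained with and without disturbances.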

Reward Shaping

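Defining "success" is more complicated than it looks. A sparse reward signal (e.g., "maximise profit at the end of the month") gives the agent too little feedback to work out which of its actions actually mattered. We design dense, shaped reward functions that guide the agent toward optimal behaviour without creating "reward hacking" loops, where the agent farms the shaping signal instead of pursuing the real objective.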
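One well-known way to add dense feedback without inviting loops is potential-based reward shaping, where the bonus is the discounted change in a potential function over states. The sketch below is an illustration of that general technique, not a description of OptRL's reward functions; the `potential` heuristic (distance from a target stock level of 40) and the function names are hypothetical.

```python
def potential(stock: int, target: int = 40) -> float:
    """Hypothetical heuristic: states closer to the target stock score higher."""
    return -abs(stock - target)


def shaped_reward(sparse_reward: float, state: int, next_state: int,
                  gamma: float = 0.99) -> float:
    """Sparse reward plus the potential-based shaping term
    gamma * potential(next_state) - potential(state)."""
    return sparse_reward + gamma * potential(next_state) - potential(state)


# With gamma = 1, the shaping terms along a loop that returns to its
# starting state cancel out, so circling earns no net bonus.
loop = [30, 35, 40, 30]
loop_bonus = sum(
    shaped_reward(0.0, s, s_next, gamma=1.0)
    for s, s_next in zip(loop, loop[1:])
)
```

Because the bonus depends only on the change in potential, moving toward the target is rewarded immediately, while wandering away and back gains nothing.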

Safety Constraints

In our simulation suite, agents are heavily penalised for violating safety constraints (e.g., overheating a server or exceeding a budget). This is how we build Operational Governance into a policy before it is ever deployed.
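As a rough sketch of what such a penalty could look like, the function below subtracts a large cost proportional to how far a limit is exceeded. The limits, the `penalty_weight`, and all names are assumptions chosen for illustration, not values from OptRL's suite.

```python
from dataclasses import dataclass


@dataclass
class SafetyLimits:
    # Hypothetical limits for the two examples mentioned above.
    max_temp_c: float = 80.0   # server temperature ceiling
    max_spend: float = 1000.0  # budget ceiling


def penalised_reward(task_reward: float, temp_c: float, spend: float,
                     limits: SafetyLimits, penalty_weight: float = 100.0):
    """Return (reward, violated). The penalty grows with the relative
    size of each violation and is zero inside the limits."""
    temp_excess = max(0.0, temp_c - limits.max_temp_c)
    spend_excess = max(0.0, spend - limits.max_spend)
    violated = temp_excess > 0 or spend_excess > 0
    penalty = penalty_weight * (
        temp_excess / limits.max_temp_c + spend_excess / limits.max_spend
    )
    return task_reward - penalty, violated
```

Returning the `violated` flag alongside the reward lets the simulator log every breach separately, so constraint violations stay visible even when the task reward is high.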

Real-world RL isn't magic. It's engineering. And it starts with a good simulation.